AI-driven Enterprise IoT - One engine: Connect, Transform, Analyze, Act.

Flex83 eliminates snowballing complexity. The only unified data processing engine built for both predictive and generative AI. Cortex, the platform-native AI copilot, guides every implementation step.

Trusted by teams at global enterprises

for industrial data and asset ecosystem

proven at enterprise scale

vs. build-from-scratch programs

AWS, Azure, GCP, private cloud, or edge

one platform, one contract

Flex83 Platform. Every Layer Covered.

Flex83 is structured as a complete implementation workflow, from connecting your first data source to deploying AI across millions of devices. Cortex is the platform-native AI copilot that knows every component deeply: describe where you are, and it guides your next implementation step — or executes it for you.

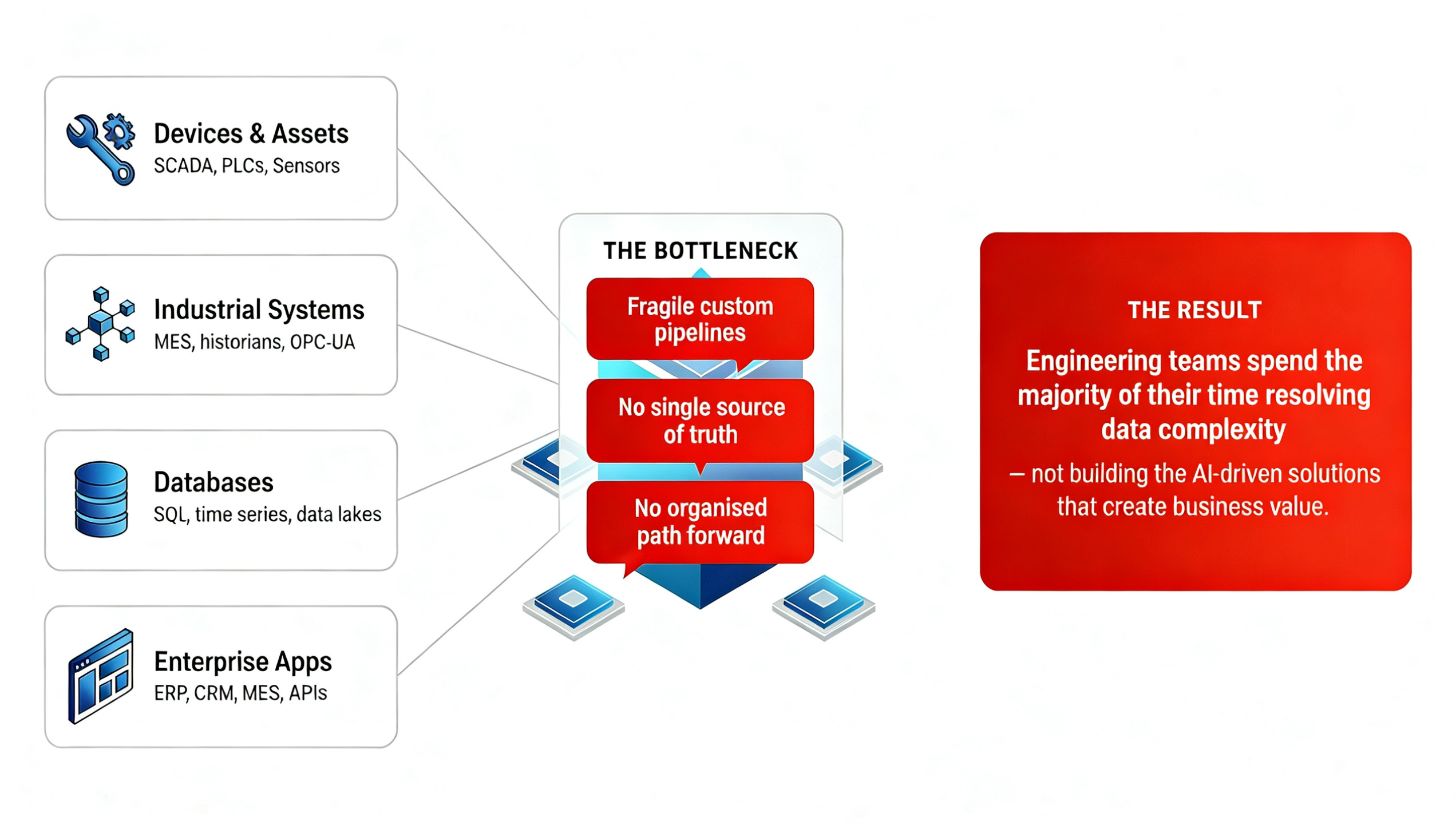

The Problem: Fragmented data, disconnected systems, and complexity

Launching your digital solutions portfolio shouldn’t mean stitching together a software company from scratch.

The Solution: A unified workflow where every layer works together — from first connection to AI-driven action.

Six capability layers. Each one delivers something your program needs — and removes something that has been costing you. Not in theory. As measurable outcomes.

Data Connectivity

Any device, any system, any protocol — connected without custom code.

Data Governance

Any data becomes business-ready context — automatically, at the source.

Data Processing

Combine siloed data into valuable data collections and analytics.

Data Analysis

From raw data to insight — without building a backend for every question.

Data Visualization

Turn unified data into dashboards, widgets, and embedded analytics — without building a custom UI layer.

LLM Context Creation

Your asset data, made readable by AI agents — for root cause analysis and natural language action.

Your Industrial IoT Journey at Scale

An end-to-end data pipeline with built-in governance, scale-ready asset management, connected business solutions, and more.

Build Your Data Foundation

Single source of truth for all asset telemetry, events, and metadata. Protocol-agnostic ingestion from edge to cloud.

Unified data lake & lineage

Edge-to-cloud connectors

Quality & governance built in

Add Intelligence via Pipelining

Turn raw telemetry into actionable insights — real-time stream processing, AI/ML orchestration, and analytics pipelines.

Stream & batch processing

AI/ML orchestration

Real-time dashboards

Launch Multiple Applications on the Platform

Ship vertical solutions and custom apps by consuming catalog APIs and pre-built modules. White-label ready.

Catalog API consumption

White-label branding

Custom app development

Not a feature checklist.

A comparison of what you actually experience.

Building and operating an industrial AI and IoT program looks very different depending on your foundation.

Directional assessment based on IoT83 deployment and customer experience. Explore full product →

| Capability | Flex83 | Typical alternative |
| --- | --- | --- |
| Multi-tenancy | New customer in days: workspace isolation, custom branding, and RBAC built in from day one | Weeks of custom provisioning, isolation engineering, and access control work per customer |
| AI capabilities | ML Studio, LLM/RAG, and Cortex, the AI copilot. Two AI systems, one platform. | Separate ML platform + custom pipelines + inference endpoint engineering for each AI system |
| Scale and governance | Same architecture from pilot to 83M devices, with 9,300+ audit entries and workspace isolation built in | Re-architecture inevitable at scale; governance added later as a compliance project |
| Cloud agnostic | Any cloud, any infra, Kubernetes-native. Deploy anywhere without touching application code | Locked to vendor cloud; migration is a multi-year re-platforming effort |
| IP ownership | AIoT Solution Suite delivered as source code: your logic, your brand, your IP | Object code / SaaS only: you own the configuration, not the logic |
| Pricing model | Flat workspace-based: no per-device metering, no per-message fees. Cost is predictable at any scale | Per-device, per-message, per-service: costs compound as your program grows |

An AIoT platform should provide capabilities and eliminate problems.

Connect

Data & IoT — any source

Every source. Zero custom code.

Protocol-agnostic ingestion from any OT/IT source — MQTT, OPC-UA, Modbus, ERP APIs, databases, cloud event hubs. The Asset Handler and Ontology layer transform raw payloads into semantically rich objects. Add a new device type in days. 70+ connectors. 87 configurable parameters per asset type.

6-step UI wizard. Cloud event hubs, ERP APIs, databases, MQTT, FTP, raw Kafka topics. Ontology-based transform.
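To make the idea of an ontology-based transform concrete, here is a minimal sketch in plain Python. It is not Flex83 code: the `ONTOLOGY` table, the `Measurement` type, and the field names are hypothetical, chosen only to show how a vendor-specific payload could be mapped to named, unit-tagged properties.

```python
from dataclasses import dataclass

# Hypothetical ontology (assumption): maps vendor-specific field names to a
# canonical property name and unit for each asset type.
ONTOLOGY = {
    "pump": {
        "tmp_c": ("temperature", "degC"),
        "prs": ("pressure", "bar"),
        "rpm": ("rotation_speed", "rpm"),
    }
}


@dataclass(frozen=True)
class Measurement:
    asset_id: str
    asset_type: str
    prop: str
    value: float
    unit: str


def to_semantic(asset_id: str, asset_type: str, payload: dict) -> list[Measurement]:
    """Translate a raw payload into typed measurements, skipping unknown fields."""
    mapping = ONTOLOGY[asset_type]
    return [
        Measurement(asset_id, asset_type, name, float(payload[raw]), unit)
        for raw, (name, unit) in mapping.items()
        if raw in payload
    ]


# A raw device message with one unmapped field ("fw") that is ignored.
raw_payload = {"tmp_c": "71.5", "prs": 4.2, "fw": "1.0.3"}
measurements = to_semantic("pump-17", "pump", raw_payload)
```

In this sketch, supporting a new device type means adding an entry to the mapping rather than writing a new parser, which is the general pattern the section describes.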

Custom connector engineering

Writing, testing, and maintaining custom connectors for each data source, custom parsers for every device protocol, home-grown device registries, and schema translation layers. On a typical enterprise program this accounts for 50,000–100,000 lines of infrastructure code before a single line of business logic is written.

Transform

Stream & batch — at scale

Real-time and historical. One platform.

Apache Flink at sub-200ms latency for continuous real-time stream processing — alerting, anomaly detection, complex windowing. Spark and Airflow for historical batch jobs, ML training, and multi-stage transformations. SQL IDE with Catalog API: save a query, get a stable JSON endpoint. No backend engineer required.

Flink SQL or custom JARs. Spark operator auto-scales. Browser-based SQL IDE — queries become stable POST endpoints.
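The "save a query, get a stable endpoint" pattern can be illustrated with a short, self-contained sketch. Everything here is an assumption for illustration: an in-memory SQLite database stands in for the query engine, and the `SAVED_QUERIES` catalog, the `/catalog/<name>` path, and `handle_post` are hypothetical names, not the actual Catalog API.

```python
import json
import sqlite3

# In-memory SQLite stands in for the platform's query engine (assumption).
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE telemetry (asset_id TEXT, temperature REAL)")
db.executemany(
    "INSERT INTO telemetry VALUES (?, ?)",
    [("pump-1", 70.0), ("pump-1", 74.0), ("pump-2", 65.0)],
)

# Hypothetical catalog: each saved query name maps to a fixed SQL statement.
SAVED_QUERIES = {
    "avg-temperature": (
        "SELECT asset_id, AVG(temperature) AS avg_temperature "
        "FROM telemetry WHERE asset_id = :asset_id GROUP BY asset_id"
    )
}


def handle_post(path: str, body: str) -> str:
    """Resolve a hypothetical /catalog/<name> path and return rows as JSON."""
    name = path.rsplit("/", 1)[-1]
    sql = SAVED_QUERIES[name]
    params = json.loads(body)  # the request body supplies named parameters
    cursor = db.execute(sql, params)
    columns = [col[0] for col in cursor.description]
    return json.dumps([dict(zip(columns, row)) for row in cursor.fetchall()])


response = handle_post("/catalog/avg-temperature", '{"asset_id": "pump-1"}')
```

The point of the design is that a caller depends only on the query name and its parameters, so the SQL behind the name can change without breaking the consumer.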

DIY streaming and batch infrastructure

Provisioning and managing Flink or Spark clusters, writing and debugging Airflow DAGs, monitoring distributed job failures, and hand-coding API endpoints for every KPI. Most teams spend months on infrastructure before writing any business logic.

AI Intelligence

Predictive & agentic

Two AI systems. Purpose-built pipelines for both. And an AI copilot to guide your development.

ML Studio powers your predictive AI — profiling, cleaning, training, and inference on live platform data, with windowed features that reduce ML compute load and increase model accuracy. LLM / RAG handles your generative AI — semantic summaries, root cause analysis, and natural language querying on your asset data, without hallucination. Cortex is the platform-native AI copilot that knows every layer: describe where you are in your implementation and it guides your next step — or executes it for you.

ML Studio: profiling → cleaning → training → inference. Cortex: multi-agent natural language interface. Separate optimised pipelines for ML and LLM.
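As a rough illustration of why windowed features can reduce ML compute load, the sketch below collapses raw samples into one summary row per window. It uses only the Python standard library; the function name, window size, and chosen statistics are assumptions, not ML Studio's actual feature set.

```python
from statistics import mean, pstdev


def windowed_features(samples: list[float], window: int) -> list[dict]:
    """Collapse each non-overlapping window of raw samples into one feature row."""
    rows = []
    # Step through full windows only; a trailing partial window is dropped.
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        rows.append({
            "mean": mean(chunk),
            "std": pstdev(chunk),
            "min": min(chunk),
            "max": max(chunk),
        })
    return rows


# Eight raw readings become two feature rows with a window of four.
raw_samples = [20.0, 22.0, 21.0, 23.0, 40.0, 42.0, 41.0, 43.0]
features = windowed_features(raw_samples, window=4)
```

A model trained on these rows sees a quarter as many inputs as one trained on the raw samples, while the jump between the two windows remains visible in the `mean` column.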

Months of ML infrastructure — and navigating implementation alone

Stitching together separate ML platforms, building custom data pipelines to feed them, managing model versioning, and maintaining inference endpoints — all before a single business outcome. A second set of pipelines for your LLM layer. And figuring out the implementation sequence yourself — documentation-diving for every step, with no platform knowledge to draw on.

Security & Governance

Inherited, not engineered

Enterprise security posture from day one.

Multi-workspace isolation, workspace-scoped API keys with domain whitelisting, and 9,300+ audit log entries in production. SonarQube + Mend dependency scanning across ~1,100 open-source libraries, automated every release. RBAC and IAM at both platform and application layers. TLS everywhere. Penetration tested.

Continuous scanning across ~1,100 OSS libraries. RBAC and IAM at platform and application layers. 400+ GB monitored in production.
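A workspace-scoped API key with a domain allowlist comes down to two checks per request. The following is a minimal sketch under stated assumptions: the `ApiKey` record, the `KEYS` store, and `authorize` are hypothetical and do not describe how Flex83 stores or validates keys.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class ApiKey:
    key: str
    workspace: str
    allowed_domains: frozenset


# Hypothetical key store (assumption): one key, bound to one workspace
# and one allowed calling domain.
KEYS = {
    "k-acme": ApiKey("k-acme", "acme", frozenset({"portal.acme.example"})),
}


def authorize(key: str, workspace: str, origin: str) -> bool:
    """Allow a request only if the key belongs to the workspace and the
    calling origin's hostname is on the key's allowlist."""
    record = KEYS.get(key)
    if record is None or record.workspace != workspace:
        return False
    host = urlparse(origin).hostname  # None when the origin has no scheme/host
    return host in record.allowed_domains


ok = authorize("k-acme", "acme", "https://portal.acme.example")
wrong_workspace = authorize("k-acme", "globex", "https://portal.acme.example")
wrong_domain = authorize("k-acme", "acme", "https://evil.example")
```

Here `ok` is allowed, while the other two requests are rejected: one for crossing workspaces and one for calling from a domain that is not on the list.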

Security and compliance engineering from scratch

Building workspace isolation, access control systems, API key management, and audit logging from scratch. Auditing open-source dependencies for CVEs, managing TLS certificate lifecycles, commissioning penetration tests. Without this groundwork in place, every new enterprise customer triggers a compliance review that delays go-live by weeks.

Widgets, Viz & Catalog

Build once, deploy anywhere

Dashboards without backend engineering.

Pre-built, data-connected UI components that embed in any web application. Administrators configure which widget displays what data for which tenant — no code, no backend work. The same widget code serves different tenants with different data contexts. Saved SQL queries become stable Catalog API endpoints.

Widget Catalog API. PostgreSQL + Redis. Dashboard API maps layouts to users and roles. Saved queries become stable POST endpoints.
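The claim that the same widget code serves different tenants with different data contexts can be sketched as configuration lookup. This is an illustrative example only: `TENANT_DATA`, `WIDGET_CONFIG`, and `render_widget` are hypothetical names, and the values are made-up sample data.

```python
# Hypothetical per-tenant data contexts (made-up sample values).
TENANT_DATA = {
    "acme": {"uptime_pct": 99.2},
    "globex": {"uptime_pct": 97.8},
}

# Hypothetical admin-managed configuration: which widget shows which
# metric, under which title, for which tenant.
WIDGET_CONFIG = {
    ("acme", "kpi-card"): {"metric": "uptime_pct", "title": "Line uptime"},
    ("globex", "kpi-card"): {"metric": "uptime_pct", "title": "Fleet uptime"},
}


def render_widget(tenant: str, widget_id: str) -> dict:
    """One code path for every tenant; only configuration and data differ."""
    cfg = WIDGET_CONFIG[(tenant, widget_id)]
    value = TENANT_DATA[tenant][cfg["metric"]]
    return {"title": cfg["title"], "value": value}


acme_card = render_widget("acme", "kpi-card")
globex_card = render_widget("globex", "kpi-card")
```

Adding a tenant in this sketch means adding configuration entries, with no change to `render_widget` itself.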

Custom UI layer and hand-coded API endpoints

Building and maintaining a custom charting and dashboard layer. Hand-coding API endpoints for every KPI or widget. Re-wiring data bindings every time a schema changes. This resurfaces with every new customer, every new integration, every schema evolution.

Multi-Tenancy

New customers in days

Complete tenant isolation. Zero engineering per customer.

Complete multi-tenant workspace architecture — customer isolation, custom branding per tenant, role-based access scoped per workspace, tenant-specific data separation. Onboard a new customer in days with a configuration-driven workflow. Each tenant gets their own API keys, branded experience, and data context.

Multi-tenant workspace architecture. Customer-scoped API keys, data isolation, and branded dashboards — all configuration-driven.

Per-customer platform engineering

Engineering workspace isolation from scratch for every new customer. Building custom branding systems, per-tenant access control, data separation, and customer-specific configuration management. Without built-in multi-tenancy, every new enterprise customer is a platform engineering project.

The proof is in execution and results:

Your Digital Solutions Team

Without Flex83

With Flex83

From 20% to 70% of engineering on product. We consistently see this shift at Month 18 across telecom, manufacturing, and semiconductor programs built on Flex83 vs. custom-built architectures.

Niclas Anderson

VP Sales, Vitronic

Two Deliverables. Clear Ownership.

A licensed platform and a source-code solution — different licensing, different IP, one integrated stack.

AIoT Platform

- Device connectivity (70+ connectors)

- Stream & batch processing

- AI/ML engine with BYOM support

- Widget catalog & dashboard framework

- Platform APIs (Data, AI/ML, Catalog)

AIoT Solution Suite

- IAM & Role-Based Access Control

- Asset Ontology & type definitions

- Widget & dashboard composition

- Multi-tenancy & tenant configuration

- Application provisioning per customer
